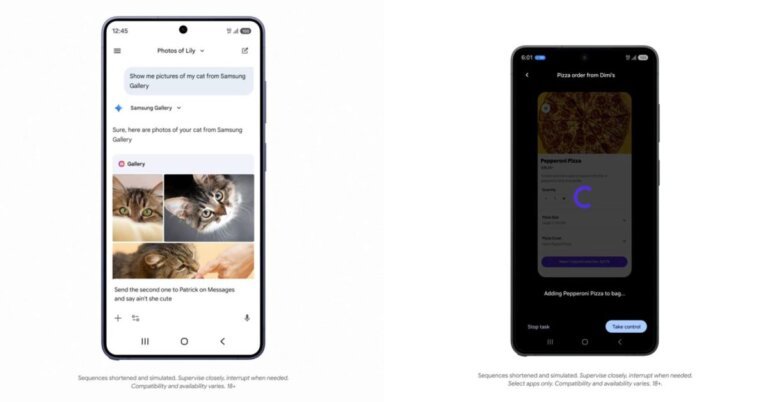

Google has introduced early beta features for Android aimed at making the platform more task-centric, as part of a move toward an "agent-first" operating system. The key component is AppFunctions, a Jetpack API that lets developers expose self-describing capabilities in their apps so that AI agents can discover and invoke them. It plays a role similar to the backend tools that cloud servers declare through the Model Context Protocol (MCP), except that the functions execute locally on the device, which benefits both privacy and performance.

Google has also introduced a UI automation platform that helps users perform complex tasks in apps whose developers have done no integration work. Through the Gemini Assistant, users can complete tasks such as placing a pizza order or coordinating a rideshare.

Privacy and user control are emphasized: interactions are designed to run on-device, and sensitive tasks require explicit user confirmation. These features are currently in early beta and available only on the Galaxy S26 series, with broader deployment planned for Android 17.
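To make the idea of self-describing, locally executed capabilities more concrete, here is a minimal plain-Java sketch. It does not use the AppFunctions API: every name in it (`AgentFunctionSketch`, `Capability`, `Registry`, `orderPizza`, `listToppings`) is hypothetical and chosen only for illustration. It assumes an agent first reads a description of what an app can do, then invokes a capability by name, and that capabilities flagged as sensitive run only after the user confirms.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.function.Function;
import java.util.function.Predicate;

/** Hypothetical sketch; none of these names come from the AppFunctions API. */
final class AgentFunctionSketch {

    /** A self-describing capability: name, description, sensitivity flag, handler. */
    static final class Capability {
        final String name;
        final String description;
        final boolean sensitive;
        final Function<Map<String, String>, String> handler;

        Capability(String name, String description, boolean sensitive,
                   Function<Map<String, String>, String> handler) {
            this.name = name;
            this.description = description;
            this.sensitive = sensitive;
            this.handler = handler;
        }
    }

    /** In-process registry standing in for on-device discovery and execution. */
    static final class Registry {
        private final Map<String, Capability> capabilities = new LinkedHashMap<>();

        void register(Capability capability) {
            capabilities.put(capability.name, capability);
        }

        /** The text an agent would read to learn what the app can do. */
        String describe() {
            StringBuilder sb = new StringBuilder();
            for (Capability c : capabilities.values()) {
                sb.append(c.name).append(": ").append(c.description).append('\n');
            }
            return sb.toString();
        }

        /** Runs a capability locally; sensitive ones need user confirmation first. */
        String invoke(String name, Map<String, String> args,
                      Predicate<Capability> userConfirms) {
            Capability c = capabilities.get(name);
            if (c == null) {
                throw new IllegalArgumentException("Unknown capability: " + name);
            }
            if (c.sensitive && !userConfirms.test(c)) {
                return "CANCELLED";
            }
            return c.handler.apply(args);
        }
    }

    /** Builds a registry with two made-up capabilities for a pizza app. */
    static Registry demoRegistry() {
        Registry registry = new Registry();
        registry.register(new Capability("orderPizza", "Places a pizza order", true,
                args -> "Ordered " + args.getOrDefault("size", "medium") + " pizza"));
        registry.register(new Capability("listToppings", "Lists available toppings", false,
                args -> "mushroom, pepperoni"));
        return registry;
    }

    public static void main(String[] args) {
        Registry registry = demoRegistry();
        System.out.print(registry.describe());
        // The user declines the sensitive order, then approves it.
        System.out.println(registry.invoke("orderPizza", Map.of("size", "large"), c -> false));
        System.out.println(registry.invoke("orderPizza", Map.of("size", "large"), c -> true));
    }
}
```

The confirmation step is passed in as a predicate so that the registry itself never decides whether a sensitive action may proceed. In a real system that decision would presumably sit with the platform and the user rather than with the app or the agent.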