AWS has ended standard support for PostgreSQL 13 on its RDS platform, urging customers to upgrade to PostgreSQL 14 or later. PostgreSQL 14 defaults to a more secure password authentication scheme (SCRAM-SHA-256), which AWS Glue cannot handle. Users who upgrade may encounter the error "Authentication type 10 is not supported," which halts their data pipelines.

The incompatibility has been known since PostgreSQL 14's release in 2021, and the deprecation timeline for PG13 was communicated in advance. AWS Glue's connection-testing infrastructure relies on an internal driver that predates SCRAM-SHA-256 support, so the "Test Connection" check fails. Customers face three options: downgrade the database's password encryption to the older, less secure standard; supply a custom JDBC driver, which disables connection testing; or rewrite ETL workflows as Python shell jobs.

Extended Support for customers who remained on PG13 is automatically enabled unless opted out during cluster creation, costing [openai_gpt model="gpt-4o-mini" prompt="Summarize the content and extract only the fact described in the text bellow. The summary shall NOT include a title, introduction and conclusion. Text: AWS PostgreSQL 13 Support Ends, Unveiling Compatibility Challenges

Earlier this month, AWS concluded standard support for PostgreSQL 13 on its RDS platform, urging customers to upgrade to PostgreSQL 14 or later to maintain a supported database environment. This transition aligns with PostgreSQL 13's community end-of-life, which occurred late last year.

PostgreSQL 14, introduced in 2021, enhances security by adopting a new password authentication scheme known as SCRAM-SHA-256. However, this upgrade inadvertently disrupts the functionality of AWS Glue, the managed ETL (extract-transform-load) service, which is unable to accommodate the new authentication method. Consequently, users who heed AWS's security recommendations may find themselves facing an error message stating, "Authentication type 10 is not supported," effectively halting their data pipeline operations.

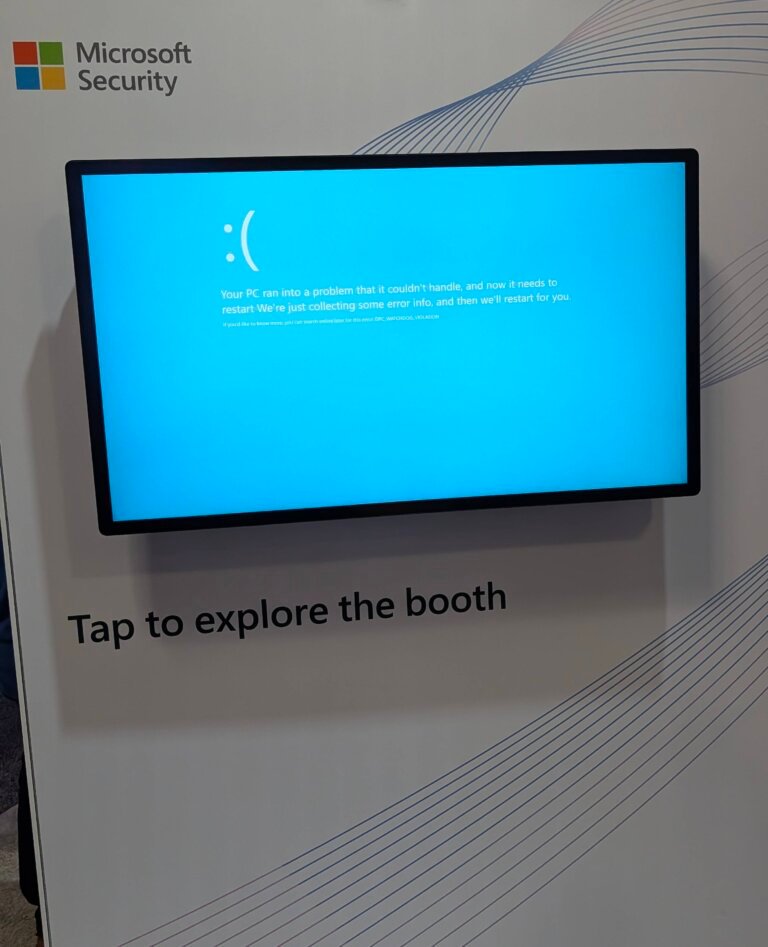

This situation is particularly concerning as both RDS and Glue are typically utilized within production environments, where reliability is paramount. The deprecation of PostgreSQL 13 did not create this issue; rather, it eliminated the option to bypass a long-standing problem that has persisted for five years. Customers now face a dilemma: either accept an increased maintenance burden or incur costs associated with Extended Support.

The crux of the matter lies in the connection-testing infrastructure of AWS Glue, which relies on an internal driver that predates the newer authentication support. When users click the "Test Connection" button to validate their setup, it fails to function as intended. A community expert on AWS's support forum acknowledged three years ago that an upgrade to the driver was pending, assuring users that crawlers would operate correctly. However, reports have surfaced indicating that crawlers also encounter issues, further complicating the situation.

This incompatibility has been acknowledged since PostgreSQL 14's release, and the deprecation timeline for PG13 was communicated in advance. Both the RDS and Glue teams are likely aware of industry developments, yet it appears that neither team monitored the implications of their respective updates on one another.

The underlying reason for this disconnect is rooted in AWS's organizational structure, which comprises tens of thousands of engineers divided into numerous semi-autonomous service teams. Each team operates independently, with the RDS team focusing on lifecycle deprecations and the Glue team managing driver dependencies. Unfortunately, this division of responsibilities has resulted in a lack of ownership over the gap between the two services, leaving customers to confront the consequences in their production environments.

This scenario is not indicative of malice or a deliberate revenue enhancement strategy; instead, it reflects the challenges posed by organizational complexity. Integration testing across service boundaries is inherently difficult, particularly when those boundaries span multiple billion-dollar businesses under the same corporate umbrella. The unfortunate outcome is that customers are left to grapple with the fallout of these misalignments.

For those facing a broken pipeline in the early hours of the morning, the rationale behind the incompatibility becomes irrelevant. The pressing need is for a solution, and AWS has presented three options, none of which are particularly appealing:

Downgrade the password encryption on your database to the older, less secure standard, which contradicts AWS's own security guidance.

Utilize a custom JDBC driver, which disables connection testing and may not support all desired features.

Reconstruct ETL workflows as Python shell jobs, effectively abandoning the benefits of a managed service.

For customers who opted to remain on PG13 to avoid this specific issue, Extended Support is now automatically enabled unless explicitly opted out during cluster creation—a detail that can easily be overlooked. This support incurs a fee of [cyberseo_openai model="gpt-4o-mini" prompt="Rewrite a news story for a technical publication, in a calm style with creativity and flair based on text below, making sure it reads like human-written text in a natural way. The article shall NOT include a title, introduction and conclusion. The article shall NOT start from a title. Response language English. Generate HTML-formatted content using tag for a sub-heading. You can use only , , , , and HTML tags if necessary. Text: Earlier this month, AWS ended standard support for PostgreSQL 13 on RDS. Customers who want to stay on a supported database — as AWS is actively encouraging them to do — need to upgrade to PostgreSQL 14 or later.

This makes sense, as PostgreSQL (pronounced POST-gruh-SQUEAL if, like me, you want to annoy the living hell out of everyone within earshot) 13 reached its community end of life late last year.

PostgreSQL 14, which shipped in 2021, defaults to a more secure password authentication scheme (SCRAM-SHA-256, for any nerds that have read this far without diving for their keyboards to correct my previous parenthetical). It also just so happens to break AWS Glue, their managed ETL (extract-transform-load) service, which cannot handle that authentication scheme. If you upgrade your RDS database to follow AWS's own security guidance, AWS's own data pipeline tooling responds with "Authentication type 10 is not supported" and stops working.

Given that both of these services tend to hang out in the environment that most companies call "production," this is not terrific!

The deprecation didn't create this problem. It just removed the ability to avoid a problem that has existed for five years, unless you take on an additional maintenance burden or pay the Extended Support tax.

Here's the technical shape of the Catch-22, stripped to what matters: when you move to a newer PostgreSQL on RDS, Glue's connection-testing infrastructure uses an internal driver that predates the newer authentication support. The "Test Connection" button — the thing you'd click to verify that your setup works before trusting it with production data — simply doesn't. A community expert on AWS's support forum acknowledged three years ago that "the tester is pending a driver upgrade," and assured users that crawlers use their own drivers and should work fine. Users in the same thread reported back that the crawlers also fail. Running Glue against RDS PostgreSQL is a bread-and-butter data engineering pattern, not an edge case — this is a well-paved path that AWS has let fall into disrepair.

The incompatibility has been known since PostgreSQL 14 shipped in 2021. The deprecation timeline for PG13 was announced in advance. Both teams—RDS and Glue—presumably track industry developments. Neither, apparently, bothered to track each other.

The charitable read on how this happens is also the correct one: AWS has tens of thousands of engineers organized into hundreds of semi-autonomous service teams. The RDS team ships deprecations on the RDS lifecycle, the Glue team maintains driver dependencies on the Glue roadmap, and nobody explicitly owns the gap between them. The customer discovers the incompatibility in production, usually at an inconvenient hour.

This is not a conspiracy, as AWS lacks the internal cohesion needed to pull one of those off. This is also not a carefully-constructed revenue-enhancement mechanism, because the Extended Support revenue is almost certainly a rounding error on AWS's balance sheet compared to the customer ill-will it generates. Instead, this is simply organizational complexity doing what organizational complexity does. It's the same reason your company's internal tools don't talk to each other; AWS is just doing it at a scale where the blast radius is someone else's production database. Integration testing across service boundaries is genuinely hard when those boundaries span multiple billion-dollar businesses that happen to share a parent company. Nobody woke up and decided to break Glue. It came that way from the factory.

I want to be clear that I genuinely believe this, because the alternative I'm about to describe isn't about intent.

The problem with the charitable read is that it doesn't matter

If you're staring at a broken pipeline in your environment at 2 am, the reason is academic. You need a fix. AWS has provided three of them, and they all suck. You can downgrade password encryption on your database to the older, less secure standard: the one you just upgraded away from, per AWS's own recommendations. You can bring your own JDBC driver, which disables connection testing and may not support all the features you want. Or you can rewrite your ETL workflows as Python shell jobs.

Every exit means giving up the entire value proposition of a managed service — presumably why you're in this mess to begin with — or walking back the security improvement you were just told to make.

For customers who stayed on PG13 to avoid this specific problem, Extended Support is now running automatically unless you opted out at cluster creation time—a detail that's easy to miss. That's $0.10 per vCPU-hour for the first two years, doubling in year three. A 16-vCPU Multi-AZ instance works out to nearly $30,000 per year in Extended Support fees alone. It's not a shakedown. But it is a number that appears on a bill, from a company that also controls the timeline for fixing the problem, and all of the customer response options are bad.

AWS doesn't need to be running a shakedown. They just need to be large enough that the result is indistinguishable from one.

This pattern isn't unique to AWS, and it isn't going away. Every major cloud provider – indeed, every major technology provider – is a portfolio of semi-autonomous teams whose roadmaps occasionally collide in their customers' environments. It will happen again, with different services and different authentication protocols and different billing line items. The question isn't whether the org chart will produce another gap like this. It will. The question is what happens after the gap appears: does the response look like accountability — acknowledging the incompatibility before the deprecation deadline, not after — or does it look like a shrug and three paid alternatives?

Never attribute to malice what can be adequately explained by one very large org chart. Just don't forget to check the invoice. ®" temperature="0.3" top_p="1.0" best_of="1" presence_penalty="0.1" ].10 per vCPU-hour for the first two years, doubling in the third year. For instance, a 16-vCPU Multi-AZ instance could result in nearly ,000 annually in Extended Support fees alone. While this may not be a deliberate exploitation of customers, it does present a significant financial burden, especially given that AWS controls the timeline for resolving the underlying problem.

This pattern of organizational dissonance is not unique to AWS; it is a common occurrence among major cloud providers and technology companies alike. Each operates as a collection of semi-autonomous teams, leading to potential conflicts that can manifest in customer environments. The future will likely see similar gaps arise, characterized by different services, authentication protocols, and billing implications. The critical question remains: how will these organizations respond once such gaps are identified? Will they demonstrate accountability by acknowledging incompatibilities before deprecation deadlines, or will they offer a shrug accompanied by three costly alternatives?

In navigating this complex landscape, it is essential to remember that the challenges posed by large organizational structures can often lead to unintended consequences. As customers, vigilance regarding invoices and service compatibility is paramount." max_tokens="3500" temperature="0.3" top_p="1.0" best_of="1" presence_penalty="0.1" frequency_penalty="frequency_penalty"].10 per vCPU-hour for the first two years and doubling in the third year. This situation reflects the challenges posed by AWS's organizational complexity, where independent teams may not effectively coordinate updates, leading to customer difficulties.
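
As a rough check on the Extended Support figures, the arithmetic can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `extended_support_cost` is hypothetical, a year is taken as 8,760 hours, and a Multi-AZ deployment is assumed to be billed for the vCPUs of both the primary and the standby instance (an assumption that is consistent with the "nearly $30,000" figure, not a statement of AWS's billing rules).

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours; leap years ignored


def extended_support_cost(vcpus, rate_per_vcpu_hour, multi_az=False):
    """Estimate one year of Extended Support fees in dollars.

    Assumption: a Multi-AZ deployment is billed for twice the vCPUs
    (primary plus standby). Hypothetical helper, for illustration only.
    """
    billed_vcpus = vcpus * (2 if multi_az else 1)
    return billed_vcpus * rate_per_vcpu_hour * HOURS_PER_YEAR


# $0.10 per vCPU-hour in years one and two, doubling to $0.20 in year three
year_one = extended_support_cost(16, 0.10, multi_az=True)
year_three = extended_support_cost(16, 0.20, multi_az=True)

print(f"Years 1-2: ${year_one:,.2f} per year")    # Years 1-2: $28,032.00 per year
print(f"Year 3:    ${year_three:,.2f} per year")  # Year 3:    $56,064.00 per year
```

Under these assumptions the first two years come to about $28,000 each, and the doubled rate in year three pushes the annual fee past $56,000.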