Efforts to consolidate every storage role onto a single system are ongoing, driven largely by Amazon S3's durability and low cost. In PostgreSQL, however, a durable commit requires flushing the Write-Ahead Log (WAL) to stable storage before the transaction is reported as complete. That flush can take tens of microseconds on high-performance NVMe drives but milliseconds on slower storage. This latency directly affects Online Transaction Processing (OLTP) systems and user response times. Benchmark studies show that systems with faster local storage outperform those with slower storage once the workload exceeds memory capacity.
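To get a rough feel for the flush cost on a given volume, a small write-then-fsync loop can be timed. The sketch below is only a proxy: the function names, the 8 KiB record size, and the iteration count are assumptions made for illustration, and PostgreSQL's actual WAL flush depends on `wal_sync_method` and the platform. PostgreSQL also ships `pg_test_fsync`, which is the more appropriate tool for real measurements.

```python
import os
import statistics
import tempfile
import time


def measure_fsync_latency(directory, writes=50, record_size=8192):
    """Return per-write latencies in seconds for append + fsync in `directory`."""
    payload = b"\0" * record_size  # assumed record size, not a PostgreSQL constant
    latencies = []
    fd, path = tempfile.mkstemp(dir=directory)
    try:
        for _ in range(writes):
            start = time.perf_counter()
            os.write(fd, payload)
            os.fsync(fd)  # block until the OS reports the data as flushed
            latencies.append(time.perf_counter() - start)
    finally:
        os.close(fd)
        os.unlink(path)
    return latencies


def summarize(latencies):
    """Reduce raw latencies to median and approximate p99, in microseconds."""
    ordered = sorted(latencies)
    p99_index = min(len(ordered) - 1, int(len(ordered) * 0.99))
    return {
        "median_us": statistics.median(ordered) * 1e6,
        "p99_us": ordered[p99_index] * 1e6,
    }


if __name__ == "__main__":
    # Point `scratch` at the volume under test; a temp dir is used here by default.
    with tempfile.TemporaryDirectory() as scratch:
        print(summarize(measure_fsync_latency(scratch)))
```

Running it against directories on different volumes gives a quick relative comparison. The absolute numbers depend on the filesystem, the drive's write cache, and whether the device has power-loss protection.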

In PostgreSQL, an fsync is a durability commitment rather than a simple write: the call returns only once the storage device reports the data as persistent. Enterprise-grade SSDs with power-loss protection perform better here. Reads face a similar mismatch, because PostgreSQL issues small, latency-sensitive reads while S3 is designed for larger, higher-latency requests. Once the working set exceeds memory, storage latency becomes a critical performance factor.
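The effect of a growing working set can be illustrated with a simple weighted-average model of read latency. The latency figures below are assumptions chosen only to show the shape of the effect (about 1 µs for a cache hit, 100 µs for an NVMe read, 20 ms for an object-storage request); they are not measurements from the source.

```python
def effective_read_latency_us(hit_ratio, memory_us, storage_us):
    """Average read latency when `hit_ratio` of reads are served from memory."""
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    return hit_ratio * memory_us + (1.0 - hit_ratio) * storage_us


if __name__ == "__main__":
    # Illustrative, assumed latencies in microseconds.
    MEMORY_US = 1.0
    NVME_US = 100.0
    OBJECT_STORE_US = 20_000.0

    for hit_ratio in (0.999, 0.99, 0.90):
        nvme = effective_read_latency_us(hit_ratio, MEMORY_US, NVME_US)
        remote = effective_read_latency_us(hit_ratio, MEMORY_US, OBJECT_STORE_US)
        print(f"hit ratio {hit_ratio:.3f}: NVMe {nvme:.1f} us, object store {remote:.1f} us")
```

Under these assumed numbers, a 99% hit ratio gives roughly 2 µs on average with NVMe behind the cache and roughly 201 µs with object storage behind it. The gap widens quickly as the hit ratio falls, which is what happens when the working set outgrows memory.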

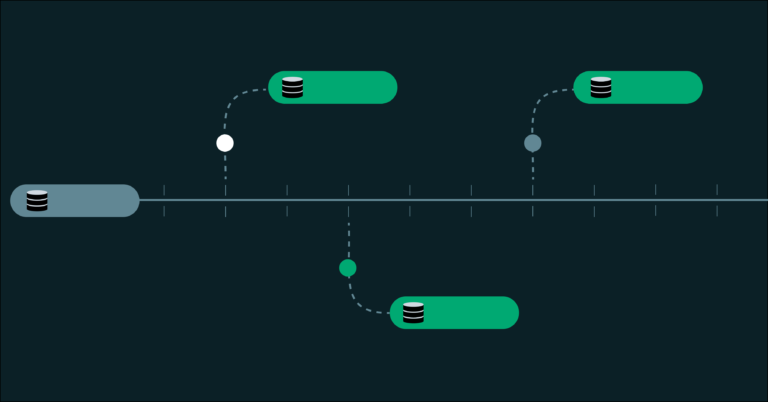

Modern managed PostgreSQL systems typically do not place object storage in the critical commit path, instead maintaining a fast log or cache close to the database while relegating colder data to remote storage. Recent PostgreSQL developments, such as asynchronous I/O support in version 18, aim to leverage fast storage more effectively. S3 is valuable for tasks like WAL archiving and backups, but these should be kept separate from the commit path to avoid resource contention.
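The separation described above can be sketched as a toy log in which a commit waits only for a local fsync, while archiving runs on a background thread. This is not PostgreSQL's mechanism (its archiver ships completed WAL segments via `archive_command` or an archive library); `TieredLog` and the `upload` callable are hypothetical names standing in for a local log and an object-store client.

```python
import os
import queue
import tempfile
import threading


class TieredLog:
    """Toy log: commits are fsynced locally; archiving runs off the commit path."""

    def __init__(self, directory, upload):
        self.path = os.path.join(directory, "toy.log")
        self._fd = os.open(self.path, os.O_WRONLY | os.O_CREAT | os.O_APPEND, 0o600)
        self._upload = upload  # hypothetical callable, e.g. a wrapper around an S3 client
        self._pending = queue.Queue()
        self._worker = threading.Thread(target=self._archive_loop, daemon=True)
        self._worker.start()

    def commit(self, record: bytes):
        """Return once the record is durable locally; never wait for the upload."""
        os.write(self._fd, record)
        os.fsync(self._fd)
        self._pending.put(record)

    def _archive_loop(self):
        # A real archiver would batch, retry, and track what has been shipped.
        while True:
            record = self._pending.get()
            if record is None:  # sentinel queued by close()
                return
            self._upload(record)

    def close(self):
        """Drain pending uploads, then release the file descriptor."""
        self._pending.put(None)
        self._worker.join()
        os.close(self._fd)


if __name__ == "__main__":
    archived = []
    with tempfile.TemporaryDirectory() as scratch:
        log = TieredLog(scratch, archived.append)
        log.commit(b"txn-1\n")
        log.commit(b"txn-2\n")
        log.close()
    print(len(archived), "records archived")
```

The design point is that `commit` latency is bounded by the local device, and a slow or unavailable object store only delays archiving rather than stalling transactions.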

The practical answer is to use both NVMe and S3: fast local storage handles commits and cache misses, while object storage handles archives and backups. PostgreSQL performs best when hot and cold storage functions are clearly separated.