Meta has unveiled a new WhatsApp feature for pre-teens: parent-linked accounts that give guardians greater control over their children’s messaging and calling. The move comes amid a growing global push for child-safety legislation, which is prompting social media platforms to adopt stronger protections for younger users.

Enhanced Parental Controls

The new parent-managed accounts on WhatsApp require parents to link their own device to their pre-teen’s phone. This two-device setup lets parents oversee the child’s account, deciding who can contact it and which groups it may join. As Meta outlines, “Once set up, these accounts are controlled by the parent or guardian who will be able to decide who can contact the account and which groups they can join.”

Parents can also review message requests from unknown contacts and adjust privacy settings, all protected by a parent PIN on the managed device. The feature is intended to give younger users a safer environment in which to communicate.

Addressing Safety Concerns

Meta’s introduction of these safeguards comes amid scrutiny over its commitment to child safety, particularly in light of ongoing legal challenges in New Mexico. Allegations have surfaced suggesting that the company prioritized profits and user engagement over the well-being of younger users. In response to these concerns, Meta has also paused interactions between its AI companions and teenagers, following revelations that its chatbots were permitted to engage in flirtatious conversations with minors.

New Scam-Detection Tools

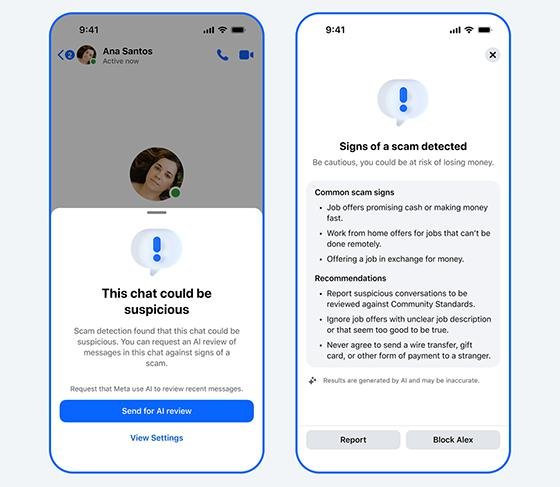

In tandem with the rollout of parent-linked accounts, Meta is enhancing its scam-detection capabilities across its platforms, including Facebook, WhatsApp, and Messenger. The company is testing new tools designed to alert users to potential scams before they engage with suspicious content. A select group of users will start receiving alerts tailored to the specific app they are using. For instance, Facebook users will be warned about dubious friend requests, while WhatsApp users will receive notifications regarding device-linking attempts, a common tactic employed by scammers.

Messenger is also set to expand its advanced scam-detection technology to additional countries, using AI to review potential scams. The feature encourages users to block or report accounts that appear suspicious.