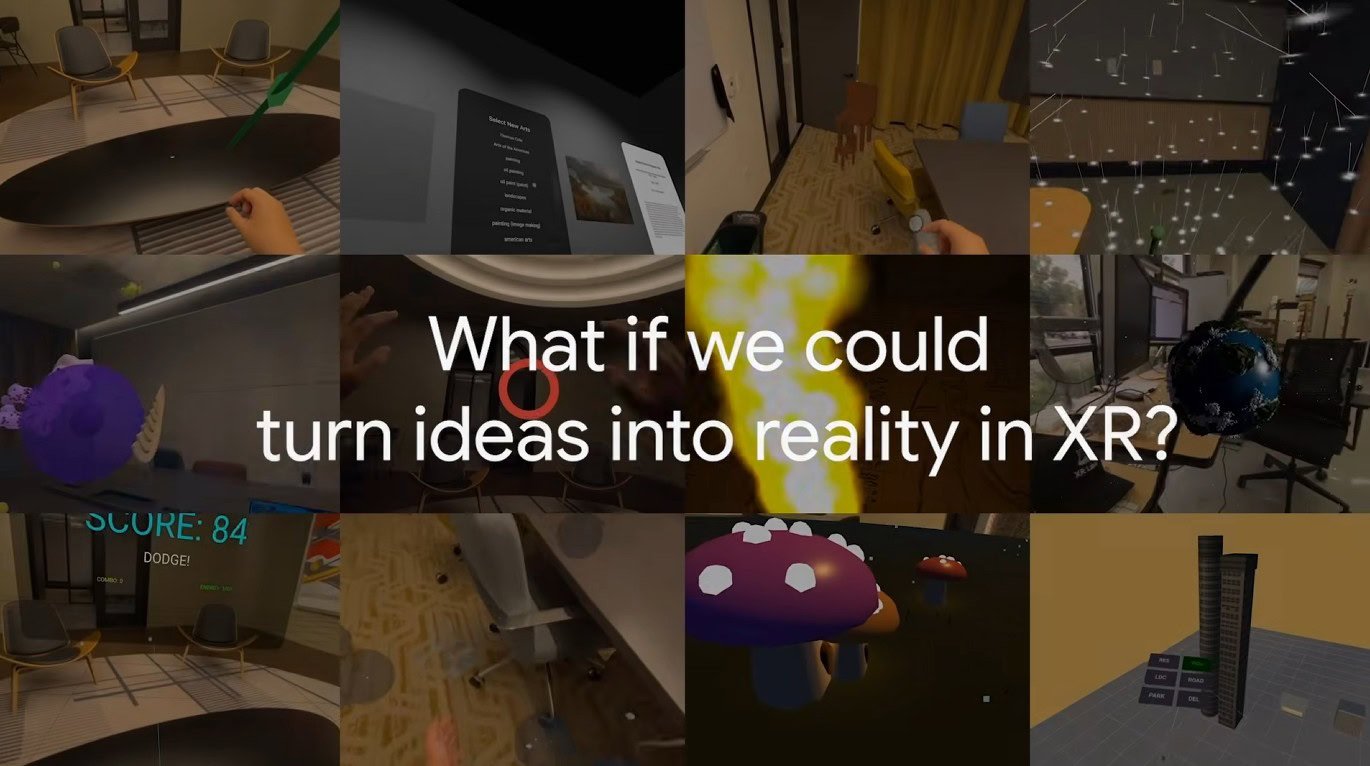

Building a virtual reality (VR) application has long required working knowledge of a game engine such as Unity, a solid grasp of 3D physics, and many hours of debugging. Google is now introducing Vibe Coding XR, an experimental workflow that turns a simple text prompt into a functional spatial experience in under a minute.

The workflow is part of a growing trend known as “vibe coding,” in which an AI model produces working software from a description of what the user wants, rather than the user writing every line of code by hand. Vibe Coding XR combines Gemini AI models with the open-source XR Blocks framework, so users can describe a scene and watch it materialize in real time.

Vibe coding hits VR: How Google is making XR development instant

The underlying technology does more than generate code from scratch. According to Google Research, the system is built on XR Blocks, a set of prebuilt modules for physics, hand interactions, and spatial user interfaces that work much like advanced LEGO pieces. Instead of scripting the mechanics of a bouncing ball line by line, Gemini assembles the appropriate blocks based on the “vibe” the user describes.
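The difference between writing mechanics by hand and assembling prebuilt blocks can be illustrated with a minimal sketch. Everything in it is hypothetical: the block names, the plan format, and the `assemble` function are invented for illustration and are not the XR Blocks API or Google's implementation. It assumes only that a model returns a list of block names with parameters, which is then checked against a catalogue of known modules.

```python
from dataclasses import dataclass, field

# Hypothetical catalogue of prebuilt modules and the parameters each accepts.
# These names are illustrative and are not taken from the XR Blocks API.
BLOCK_CATALOGUE = {
    "physics.rigid_body": {"mass", "bounciness"},
    "input.pinch_gesture": {"hand"},
    "ui.spatial_panel": {"title"},
}


@dataclass
class Scene:
    # Each entry is a (block_name, params) pair in the order it was added.
    blocks: list = field(default_factory=list)


def assemble(plan):
    """Build a Scene from a plan, rejecting unknown blocks or parameters."""
    scene = Scene()
    for step in plan:
        name = step["block"]
        params = step.get("params", {})
        if name not in BLOCK_CATALOGUE:
            raise ValueError(f"unknown block: {name}")
        unexpected = set(params) - BLOCK_CATALOGUE[name]
        if unexpected:
            raise ValueError(f"unknown parameters for {name}: {sorted(unexpected)}")
        scene.blocks.append((name, dict(params)))
    return scene


# A plan such as a model might return for "a bouncing ball I can pinch".
plan = [
    {"block": "physics.rigid_body", "params": {"mass": 0.2, "bounciness": 0.9}},
    {"block": "input.pinch_gesture", "params": {"hand": "right"}},
]
scene = assemble(plan)
```

In a design like this, the model only chooses and configures modules, so a request for a block that is not in the catalogue fails immediately instead of producing code that looks plausible but cannot run.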

In practice, the results are varied. Users have generated scenes ranging from a physics lab where they can balance scales to an interactive 3D version of the classic Chrome Dino game. One of the more intricate examples is a Schrödinger’s Cat simulation in which a “pinch” gesture lets users interact with quantum states in a mixed-reality environment.

A new playground for Android XR

The technology currently operates within a specific ecosystem. Vibe Coding XR is tailored for Android XR, with the smoothest experience on the Samsung Galaxy XR headset. Google also provides a “simulated reality” environment for desktop Chrome, so developers can test their vibe-coded creations without a headset.

Google’s internal evaluations on a dataset called VCXR60 indicate that the faster Gemini Flash model can produce a prototype in as little as 20 seconds, while the Gemini Pro model is favored for more complex tasks. The Pro variant is better at following intricate instructions and at minimizing “hallucinations,” instances where the AI generates code that refers to things that do not exist.

An assistant, not a replacement for developers

Vibe Coding XR is not intended to replace professional developers, and on its own it is unlikely to produce the next blockbuster VR game. It is a rapid prototyping tool: educators can create 3D diagrams on the spot, and developers can validate user interface concepts in minutes instead of days. In that role it serves as an assistant to XR software developers.

Google is set to showcase Vibe Coding XR at the ACM CHI 2026 conference, scheduled for April 13 to April 17.