As the Gemini season unfolds, Google is unveiling an array of innovative features designed to enhance the user experience on Android devices. During its pre-I/O showcase, the tech giant introduced a suite of capabilities under the banner of Gemini Intelligence, which promises to empower smartphones to operate with greater autonomy.

Enhancing User Experience with Gemini Intelligence

Ben Greenwood, Google’s director of Android experiences, highlighted that Gemini Intelligence brings together Gemini’s most advanced functionalities, tailored for premium Android devices such as the upcoming Galaxy S26 series. This initiative is part of a broader strategy to integrate smart technology into everyday tasks, allowing users to delegate certain responsibilities to their devices.

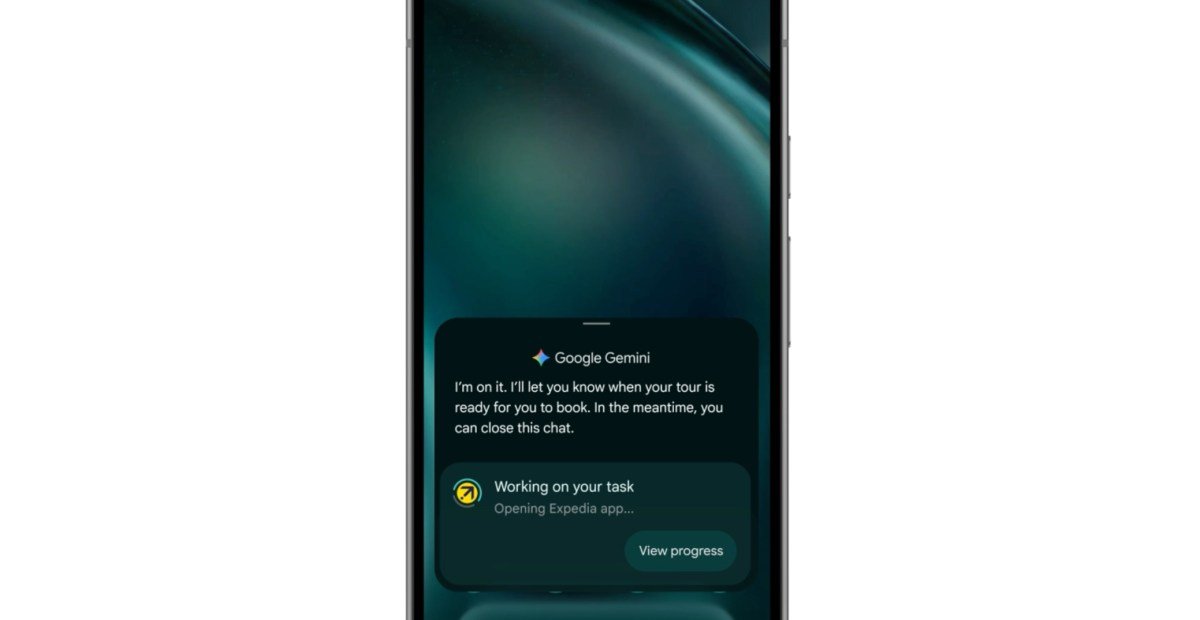

One of the standout features is task automation, which has already been implemented in select Pixel and Samsung Galaxy models. The feature was initially limited to specific rideshare and food delivery applications, and Google plans to expand it to a wider array of apps in the near future. This enhancement will enable Gemini to manage tasks on behalf of users, streamlining daily activities.

Another exciting development is the introduction of multimodality. Previously restricted to voice or text commands, Gemini can now interpret visual inputs as well. For instance, users can capture a screenshot of their grocery list, and Gemini will seamlessly add those items to their shopping cart, provided they own a compatible Android device.

Custom Widgets and Generative UI

Among the novel features is “Create My Widget,” described by Google as a pioneering step towards generative user interfaces. This functionality allows users to articulate their desired widget features in natural language, enabling AI to generate a custom widget tailored to their needs. Examples include a personalized weather widget for cyclists or a recipe suggestion dashboard that curates high-protein meal prep ideas.

This concept transforms widgets into dynamic mini-apps that can be intuitively designed and placed on the home screen, hinting at a future where interfaces could adapt and evolve in real time. As anticipation builds, many are eager to see further developments regarding generative UI during the upcoming I/O keynote.

Integration with Chrome and Autofill Features

In addition to these advancements, Google is bringing Gemini features from the desktop version of Chrome to its mobile app. Users will soon notice a Gemini button within Chrome, allowing them to share webpage content and pose questions directly in the browser. Subscribers to Google’s AI Pro or Ultra plans will also get an auto-browse feature, set to roll out by late June, that is designed to assist with tasks such as appointment bookings.

Moreover, Gemini will enhance the autofill experience on Android, offering users the option to connect it for more efficient form completion. This integration could allow Gemini to pull relevant information from Google Photos and Gmail, making the process of filling out forms more intuitive, though it raises questions about privacy and data usage.

As the rollout of Gemini Intelligence progresses throughout the year, Galaxy and Pixel phones will be the first to receive these updates, marking a significant step forward in the evolution of smartphone capabilities.